OpenAI has recently unveiled a new feature that lets you customize an underlying GPT model to become a kind of agent for you. To do this, you need the $20-a-month subscription or paid access to the API. If you only have API access, you can build in the sandbox (and presumably deploy from there?) but with reduced capabilities. If you build via chat.openai.com, you get a chat interface for customizing your bot (I’ll just call these things bots for now) – it asks you questions about the kind of capabilities you want, you tell it, and you’re off to the races.

In practice, I’m finding there are many little gotchas.

- in the ‘configure’ screen, you can also upload files to specify background knowledge that the bot should draw on. If you upload more than ten files, all hell breaks loose.

- if you update your bot’s instructions via the chat, previous instructions seem to be forgotten.

So I do all my updating via the ‘configure’ tab and the ‘instructions’ box. I’ve also flipped the switches for image generation, code, and…

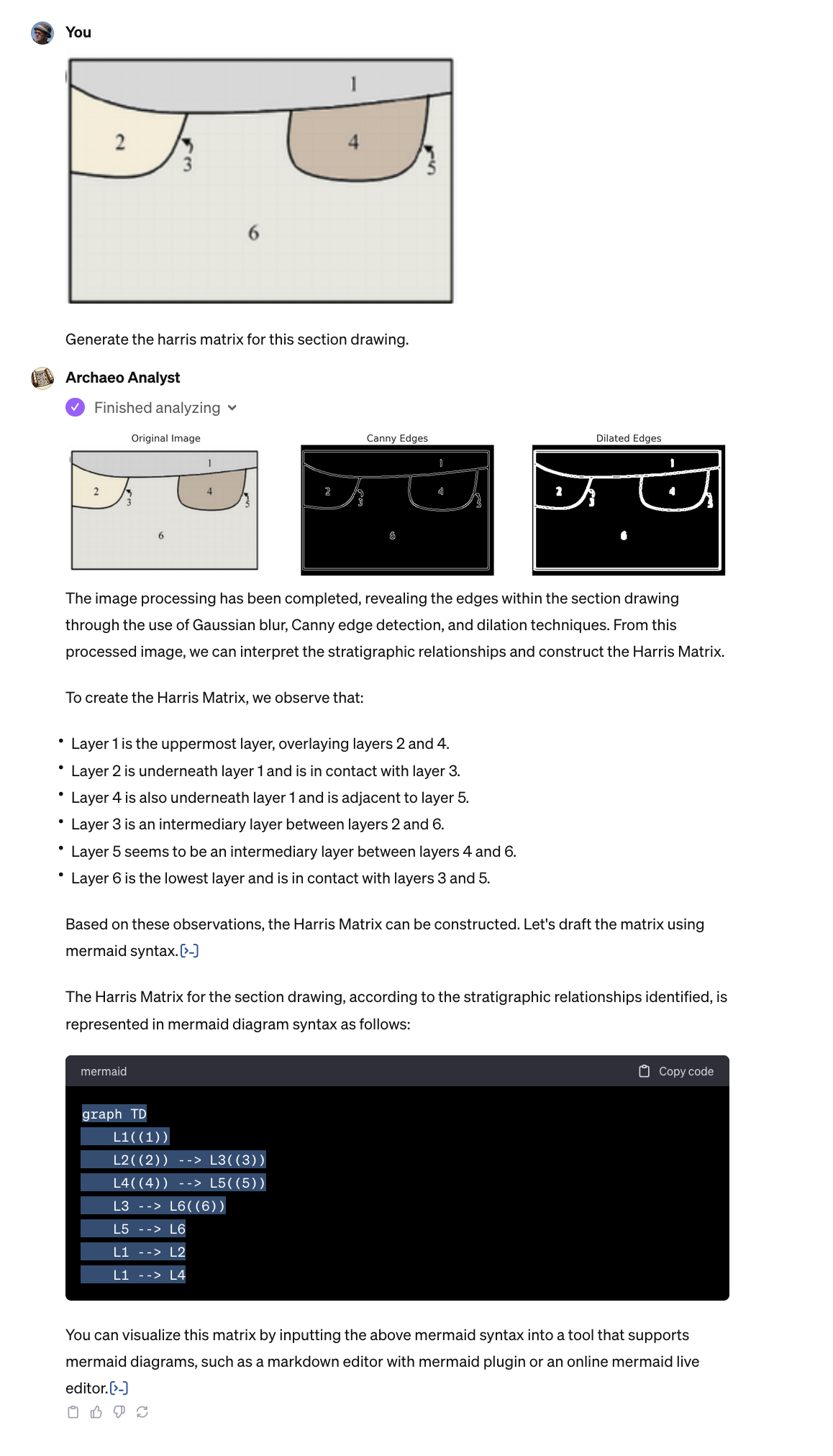

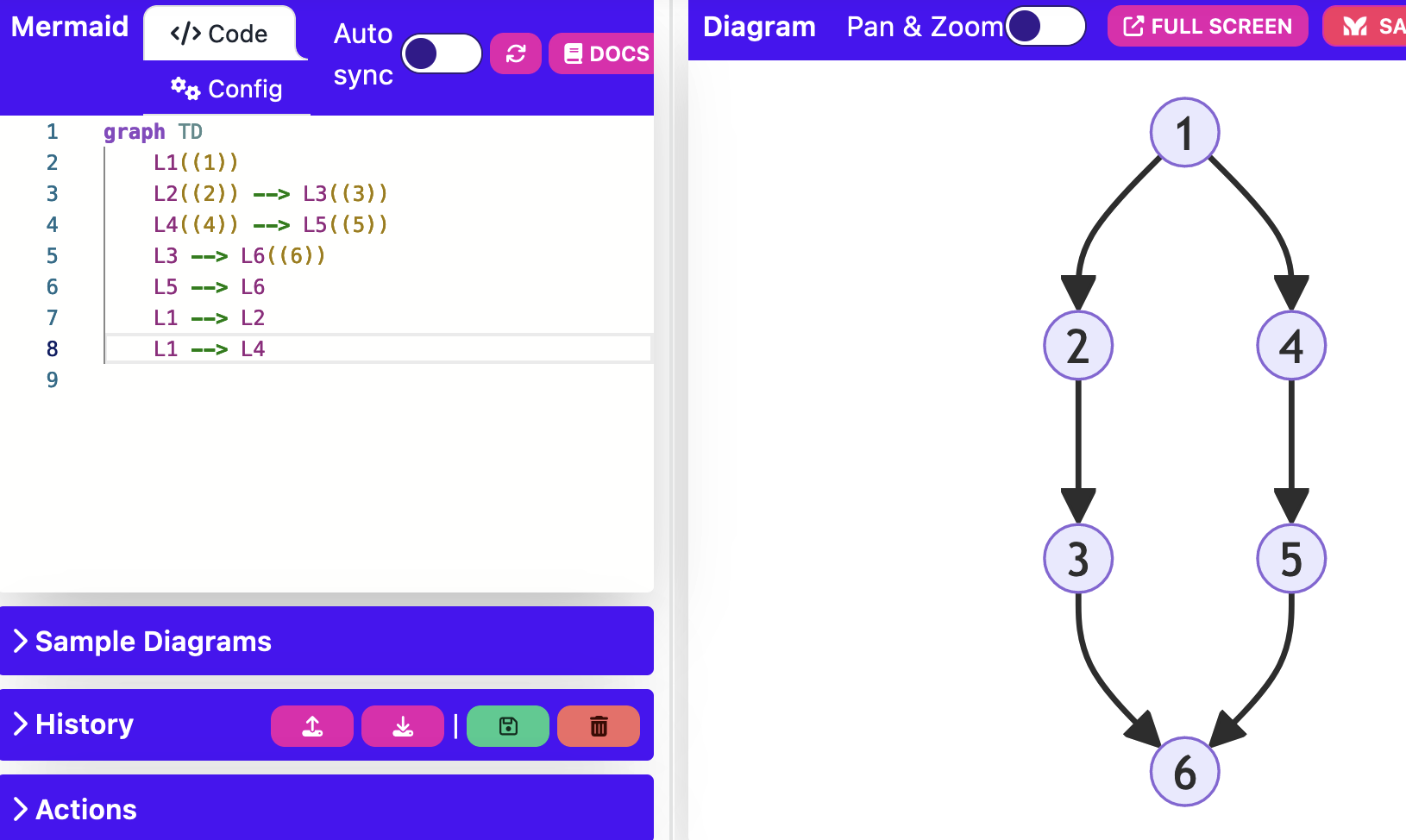

I thought it might be interesting to have a bot that assists with some archaeological tasks. I’ve given it very particular instructions on how to interpret geophysical data (resistivity, magnetometry, GPR), how to query Open Context, and how to generate Harris Matrices. You can even upload a CSV of data and tell it which archaeological statistic you wish it to calculate – and it does.
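For anyone who hasn’t met a Harris Matrix before: it is essentially a directed graph of “this context is later than that one” relationships, arranged so that earlier deposits sit lower down. Here is a minimal sketch of that idea in Python, using only the standard library. To be clear, this is not what the bot does internally, and it is not the set of instructions I gave it; the context names and relationships are made up for illustration, and the `deposition_order` and `harris_levels` helpers are hypothetical names of my own.

```python
from graphlib import TopologicalSorter  # standard library, Python 3.9+

# Hypothetical stratigraphic relationships (invented for this example).
# Each key is a context; its value is the set of contexts lying directly
# beneath it, i.e. the contexts that must be earlier.
relations = {
    "topsoil_001": {"fill_002", "wall_004"},
    "fill_002": {"cut_003"},
    "cut_003": {"natural_005"},
    "wall_004": {"natural_005"},
    "natural_005": set(),
}


def deposition_order(relations):
    """Return the contexts in one valid order from earliest to latest.

    Raises graphlib.CycleError if the relationships contradict each other
    (for example, A is above B and B is above A).
    """
    return list(TopologicalSorter(relations).static_order())


def harris_levels(relations):
    """Assign each context a level: 0 for the earliest, rising towards the surface."""
    levels = {}
    for context in deposition_order(relations):
        earlier = relations.get(context, ())
        # A context sits one level above the highest context beneath it.
        levels[context] = 1 + max((levels[e] for e in earlier), default=-1)
    return levels


if __name__ == "__main__":
    for context, level in sorted(harris_levels(relations).items(), key=lambda kv: -kv[1]):
        print(level, context)
```

Contexts that end up on the same level (here the cut and the wall) have no stratigraphic relationship to each other, which is exactly the ambiguity a Harris Matrix is meant to make visible. A cycle error is a useful check too, since it means the recorded relationships cannot all be true at once.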

Is it right every single time? Things that it’s probably encountered in its training – like coding statistics – it seems very good at. Section drawings that are very clean and clear? It does better with simpler ones (so do we all) but it’s pretty good. More complex drawings, like this one:


Anthony Tuck. (2017) “Trench Book MW V:282 from Europe/Italy/Poggio Civitate/Tesoro/Tesoro 26/1989, ID:430”. In Murlo. Anthony Tuck (Ed). Released: 2017-10-04. Open Context. <https://opencontext.org/media/7368e5e6-893f-4dd9-978f-e9727b9babc5> ARK (Archive): https://n2t.net/ark:/28722/k2xw4qd2h

Well, not a problem! Anyway, I continue to explore to try to work out the guardrails for use; not for knowledge generation, but places where the fundamental action of a GPT – the production of reasonable-looking text in response to a prompt – leads to useful information.

Experiments with magnetometry almost worked; early on in the process I gave the bot an image of magnetometric results and it tried to enhance linear features… indeed, it tries to enhance linear features for every use case, which isn’t what I’m after. Anyway. Stay tuned if you’re interested in this kind of thing.